Jason Hiner

Jason Hiner is the Chief Content Officer and Editor-in-Chief of The Deep View. He's an award-winning journalist who has spent his career analyzing how tech has reshaped the world. He covered AI for over a decade at ZDNET and CNET and watched it evolve from research labs to enterprise infrastructure to a daily reality for over a billion people. He came to The Deep View for the opportunity to cover AI every day and build a next-generation media company. For Jason's real-time takes on AI, you can find him on X/Twitter at x.com/jasonhiner.

Articles

Apple’s M5 MacBooks double down on AI builders

Apple may be playing catch-up when it comes to deploying AI features across its product line, but it's still the frontrunner when it comes to hardware for AI builders.

On Tuesday, Apple unveiled its upgraded lineup of MacBook Pro laptops running on new M5 Pro and M5 Max Apple silicon chips. While these machines are still great for Apple's traditional crowd of video editors, graphic designers, and other creatives, it's clear that Apple is now architecting these machines primarily for developers and AI builders.

The big upgrades are almost all tailored around improving performance on AI workloads and running AI models locally. These machines also got a price increase, which could be partially triggered by the RAMmageddon memory crisis in the tech industry right now.

Here's a quick recap of the most important upgrades:

- The new MacBook Pros can comfortably run local models up to about 40B parameters and can stretch up to about 90B parameters for most models, with some limitations.

- Apple has added Neural Accelerators to every GPU core and reports that they help deliver a more than 4x increase in peak GPU performance for AI workloads compared to the last-generation M4 Pro/Max chips (a big leap for one generation).

- Apple launched its new Fusion Architecture that combines two silicon dies into a single system-on-chip, integrating CPU, GPU, Neural Engine, memory controller, and Thunderbolt 5 to drive higher performance and efficiency. This architecture optimizes for sustained AI workloads like training that AI researchers would have previously been limited to running on desktops.

The memory performance upgrade has also drawn positive vibes in the AI ecosystem: https://x.com/atiorh/status/2028851509245661488

The base prices of MacBook Pros have jumped by $200 for the M5 Pro and $400 for the M5 Max. The M5 Pro starts at $2,199 for the 14-inch and $2,699 for the 16-inch. The M5 Max starts at $3,599 for the 14-inch and $3,899 for the 16-inch. Pre-orders start March 4 and March 11.

Our Deeper View

Apple's MacBook Pro machines are the laptops of choice among nearly all the teams I know who are building in the AI space. This is evidenced by the fact that products like ChatGPT Atlas and Claude Cowork launched as Mac-only at first, even though Windows has a much larger user base. And Apple doubling down on features that make its laptops even more powerful for running AI workloads, running models on-device, and training models locally is only going to make them more popular with AI builders. Of course, the question remains: When will Apple move beyond making the hardware that enables the people creating the AI revolution and launch its own AI features and products that are vertically integrated in a uniquely Apple way?

Claude becomes No. 1 app hours after Pentagon ban

In standing up to the US government, Anthropic has built up so much goodwill that people are chalking “GOD LOVES ANTHROPIC” on the sidewalks outside of its San Francisco HQ. It also gave OpenAI an opening it needed.

After Anthropic stood firm in its refusal to bend on its two conditions for using its AI (no mass surveillance of US citizens and no fully autonomous weapons), the Pentagon and the Trump Administration went to DEFCON 1 on Friday. They designated the company a supply chain risk, a title typically reserved for adversaries. President Donald Trump has also directed every government organization to “immediately cease” using Anthropic’s technology.

“The Terms of Service of Anthropic’s defective altruism will never outweigh the safety, the readiness, or the lives of American troops on the battlefield,” Secretary of War Pete Hegseth said in a post on X.

While facing the fallout of losing hundreds of millions in revenue, Anthropic has seen a massive outpouring of support, both in the AI community and beyond. In the hours after the government ban, its Claude app skyrocketed to the top of Apple's App Store, dethroning ChatGPT in the No. 1 spot. Anthropic employees, meanwhile, broadly took to X to praise their employer. AI leaders like Ilya Sutskever have commended the company for its stance, and the app has even earned the public admiration of celebrities who have nothing to do with AI, like Katy Perry.

In an interview with CBS News, CEO Dario Amodei called disagreeing with the government the “most American thing in the world.”

Anthropic vowed to take the Pentagon to court over the extent of the supply chain risk designation. In a statement on Friday, the company said the designation would both be "legally unsound and set a dangerous precedent" for companies that negotiate with the government.

In the meantime, OpenAI seized the opportunity to sign a contract with the Department of War to use its models instead.

While OpenAI claimed that its agreement with the Pentagon upholds its "redlines" over domestic surveillance and autonomous weapons and "has more guardrails than any previous agreement for classified AI deployments," the reality is a little more nuanced.

Anthropic sought to preserve explicit restrictions barring the use of its models for mass surveillance of U.S. citizens and fully autonomous weapons. The Pentagon, which often structures contracts around broad “all lawful purposes” language, reportedly preferred not to carve out those exceptions. Anthropic declined to move forward under those terms. OpenAI later signed a Department of War agreement structured around the standard federal contracting language. OpenAI CEO Sam Altman defended the stance, saying, "I do not believe unelected leaders of private companies should have as much power as our democratically elected government."

Our Deeper View

While all the attention is certainly a silver lining for Anthropic, it was also its moment of truth to uphold the company's founding principles of AI responsibility, safety, and ethics. By not bending to the Pentagon’s will, especially given that human rights and lives might be at stake, the company was keeping its promises. Its refusal to capitulate may also put pressure on rivals. An open letter titled “We Will Not Be Divided” began circulating on social media and has since garnered 537 signatures from Google employees and 89 from OpenAI employees. By holding to its stated objectives and incurring the wrath of the federal government, Anthropic has effectively made itself a martyr.

Using AI to fix job destruction, skills, and hiring

In a labor market being rewired by AI, CodeSignal is betting that skills, not resumes, will decide who thrives.

For this episode of The Deep View: Conversations, I talked with Tigran Sloyan, CEO and co-founder of CodeSignal, the company building a new standard for hiring and career mobility in the age of AI.

CodeSignal’s mission starts with a simple but painful truth: resumes and interviews are a flawed way to hire talent. Countless candidates have the skills to thrive in high-paying tech roles but never get a fair shot, while others with polished credentials sometimes land jobs they’re not prepared to do.

CodeSignal is flipping that equation with skills-based assessments that help employers discover candidates with real ability, and a free learning platform that helps candidates level up for the next opportunity.

In my conversation with Tigran, we talked about:

- Why resumes haven’t meaningfully changed in 100 years, and why it's breaking hiring

- How CodeSignal measures skills, and why simulation beats multiple-choice

- What AI unlocks for assessing non-technical roles such as sales and support

- The dark side of AI: what CodeSignal’s research shows about cheating attempts

- Why entry-level jobs are turning into tasks, and what that means for training

- How CodeSignal makes free learning content work economically

- The future of re-skilling at scale, and why AI tutoring changes everything

We also dig into what’s changing fast right now: the rise of AI-assisted work, the surge in fraud in hiring assessments, and why foundational skills still matter even when AI can do the task.

Tigran shares his background from Armenia to MIT to Google, his most contrarian leadership advice, and the AI tool he'd recommend you start using every day. If you want to understand how AI is being used to fix the problems that AI is causing in the job market, this is the podcast for you.

🎧 Listen in your favorite podcast player

Subscribe to The Deep View: Conversations podcast in your favorite podcast player for more unique conversations with the brightest minds solving the biggest challenges in AI. You can also subscribe on YouTube.


For $100K jobs, 50% now require AI skills

If you want a high-paying job, there aren't many places left to hide from AI.

According to internal data from the U.S.-based job site Ladders, as seen by The Deep View, the share of knowledge worker job listings requiring AI skills has now skyrocketed to nearly half of all roles.

"We found in our data that about 50% of all high-paying jobs at the $100,000-plus level now include some type of requirement for AI literacy," said Marc Cenedella, CEO of Ladders, in an exclusive interview with The Deep View.

That 50% with AI requirements is up from 20% in 2021, when most AI requirements focused on machine learning, deep learning, automation and big data.

Ladders, formerly TheLadders.com, launched in 2003, specializing in white-collar jobs paying $100,000 and above. This data comes from a pool of 1.1 million white-collar jobs analyzed, though the site reviews 72 million job listings a year before curating them down to a select number of the highest-quality jobs that make their list.

Here are more details from the company's internal research on AI in job listings:

- For executive roles, 45% now require AI skills

- Across all of the different roles and industries, at least 40% of the job listings now contain AI requirements

- Other specific roles where at least 45-50% of the jobs listed now require AI skills include Data, Finance, Design, Product, Software Engineering, and HR.

AI is also impacting the process of finding and landing the best jobs, and not always in a good way. Generative AI has made it easy for job seekers to quickly create personalized cover letters and resumes. But it may be secretly torpedoing candidates' chances at the best jobs, and not for the reasons they might think.

"When it comes to writing your resume, AI will give you an exactly average, typical resume, which is not what you want," said Cenedella. "You want one that's going to stand out and help you get the job. So for job seekers, it's confusing, because they'll use [AI] and it'll produce something that is extremely [typical] and reads well, but it's not actually helping them."

Our Deeper View

The fact that 50% of jobs now require AI skills doesn't tell the whole story. "When you actually read through job postings, you see that it's moved from a familiarity prerequisite,” said Cenedella, “[to where] you're going to be expected to have this knowledge within your purview." In other words, you now need to show that you know how to put the AI skills to work. And the only way you're going to do that is to actually use the technology, make mistakes with it, and figure out where it does and doesn’t make sense to use it in your daily routines. But with the technology moving so quickly, keeping up with the latest developments is a job in and of itself. So if you've been waiting to get started, now might be the time. If you want to follow my latest takes on the AI space in real-time, you can find me on X/Twitter at x.com/jasonhiner.

Perplexity may have built a better OpenClaw

Claude Code and OpenClaw have taken 2026 by storm by offering the first glimpses of personal AI agents. Perplexity just unveiled an agent that could prove to be more versatile and easier to use.

On Wednesday, the AI search firm launched Perplexity Computer, which it calls "a general-purpose digital worker that operates the same interfaces you do" and "a system that creates and executes entire workflows, capable of running for hours or even months."

It's live starting today at perplexity.ai/computer. It's only available on the web for now and not in the Perplexity app. It's also only available to Perplexity Max subscribers ($200/month) to start, while Perplexity says it will roll out to Pro ($20/month) and Enterprise subscribers in the coming weeks. To access it from perplexity.ai, you'll simply click the "Computer" icon/link in the upper left corner under the main Perplexity icon.

- Perplexity Computer coordinates with tools, files, personal context, various AI models, deep research on the open web, agentic web access, coding capabilities, and file creation.

- It draws from 19 models, open-source and proprietary, from all the leading labs. At the start, it "uses Opus 4.6 for orchestration and coding tasks, Gemini for deep research, Nano Banana for images, Veo 3.1 for video, Grok for speed in lightweight tasks, and ChatGPT 5.2 for long-context recall and wide search," according to Perplexity.

- The agent runs in a secure development sandbox.

- Perplexity has been using the agent internally since January and reports that its employees have used it to rapidly publish engineering documentation, build a 4,000-row spreadsheet overnight that would have normally taken a week, and create websites, dashboards, applications, analysis, and visualizations.

- Because agents can rack up token costs so quickly, Perplexity has introduced per-token billing for consumers for the first time. Max users get 10,000 tokens as part of their plans and Perplexity is giving them an extra 20,000 tokens for the launch of Perplexity Computer so they can kick the tires on it.

Our Deeper View

There are two aspects of Perplexity Computer that could make it a breakthrough product. The first is the orchestration of various models that are best-in-class at different functions. The second is the fact that you can tell Perplexity Computer the outcome you're looking to achieve, and it will break the work up among various agents and sub-agents, based on the best capabilities of the various models, and then carry out and coordinate the work while those agents manage their tasks simultaneously. I have a Perplexity Max subscription and I'll be testing it out and reporting back. You can also follow me on Twitter/X at x.com/jasonhiner, where I'll be posting updates on my experience with Perplexity Computer.

Can an AI agent become your Iron Man suit?

The idea of an AI Chief of Staff has quickly gained currency as AI agents have had their moment in early 2026. And at the pace the AI industry is moving right now, it's no surprise that the concept now has an official product.

On Wednesday, Quill launched Quilliam, its "Chief of AI Staff" with the purpose of powering up knowledge workers rather than replacing them and doing it in a way that can preserve security, sovereignty, and localization of data. Previously known as Quill Meetings and a competitor of products such as Granola and Fireflies, the company is transforming itself around making meetings more actionable with agentic AI.

At the same time, Quill announced a $6.5 million seed funding round and a new COO, Yacob Berhane, to pursue the new mission. The Deep View spoke with both Berhane and CEO Michael Daugherty about the launch of their Chief of AI Staff.

Here's what they highlighted it can do:

- Turn meeting action items into concrete actions: Automatically create or update project management tickets, Notion docs, and other systems of record, showing you a high‑level plan and then executing after you click approve.

- Automate follow‑through beyond meetings: It can draft emails, memos, and summaries tailored to each recipient or use case, such as VC rejection emails, internal investment memos, or a recap email from a parent‑teacher conference. So you start with decision‑making, not recaps.

- Use your entire meeting history as context: It lets you query across all past calls (e.g., “Catch me up on the last call” or “Summarize top security requests from my last three meetings”), and then it can spin those insights into structured work.

- Keep you present in meetings while capturing what matters: It lets you mark highlights and take screenshots in real time; those cues are used to personalize notes and pull out examples you were thinking about, even if you didn’t fully verbalize them in the meeting.

- Run privately on your own machine, even fully offline: The agent records and stores audio and transcripts locally, can enforce strict deletion policies, and can run against local models (e.g., OpenAI's GPT‑OSS 20B) and with Wi‑Fi off, so no meeting data has to leave the device.

Our Deeper View

In our discussion, Daugherty emphasized two different paradigms for agents. He said, “You either end up with a Waymo car, where you have an agent operating on its own… or you end up with an Iron Man suit where you are responsible for the outputs, you are actually in control, but it's making you much stronger and able to do a lot more.” Daugherty portrayed Quill's agent as the Iron Man suit. That's a metaphor that's going to make a lot more professionals and enterprises comfortable. And taking action on your meeting notes is a great place for AI agents to get started in clawing back time for workers. Naturally, this will have the biggest impact on people who spend at least half their day in meetings, rather than on individual contributors who are heads-down all day working on projects. To get my latest takes on the AI space in real-time, you can find me on Twitter/X at x.com/jasonhiner.

The 2028 intelligence crisis, and its antidote

AI could ruin everything – at least according to one recent report. But don't base your future plans on it just yet.

If the current AI boom succeeds, it could completely crash the global economy, says "The 2028 Global Intelligence Crisis" report, also known as the "CitriniResearch Macro Memo from June 2028," which has gone viral in the last 24 hours.

The authors of the report claim it's not "AI doomer fan-fiction," but a look at left-tail risks in AI that are currently going unexplored. "Left-tail risk" is a term from statistics and finance for low-probability, high-impact negative outcomes. So, before we dive into the list of the catastrophic outcomes from this report, keep in mind that these are not predictions, but worst-case scenarios.

Here's what the report warns against during the 2026-2028 timeframe:

- Reflexive AI adoption: Agentic solutions reach widespread enterprise adoption, and companies massively cut white-collar jobs and invest in more AI solutions.

- "Ghost GDP" distortions: Productivity soars on paper, but income shifts from human labor to compute. So while GDP looks strong, consumer spending starts to collapse.

- Intelligence displacement spiral: When white-collar layoffs spread, high earners pull back discretionary spending and draw down their savings.

- Private credit and ARR contagion: AI undercuts the economics of SaaS companies that make up a big chunk of public markets. When recurring revenue erodes, it causes a chain of events that leads to defaults, regulatory scrutiny, and stress to the financial system.

- Prime mortgage fragility: Mortgage holders in tech-heavy metros (San Francisco, Seattle, Austin) begin to default on loans, adding further downward pressure on the financial system.

- Policy gap vs. structural shock: Cutting interest rates doesn't work to stimulate the economy when there's large-scale labor displacement. Fixing the economy requires bipartisan structural changes to policy, such as AI compute taxes and public claims on massive profits from AI advances, but in this scenario bipartisanship fails to emerge to fill the policy gap.

Our Deeper View

It's wise to consider these worst-case scenarios. Having them in mind can allow leaders, boards of public companies, and public officials to identify early warning signs and act to prevent the worst outcomes. And let's also keep in mind that there is far more optimistic research on the other end of the spectrum. For example, in its annual 2026 Big Ideas research, ARK Invest forecast that the convergence of trends that include AI, genomics, robotics and energy will lead to a "step change in real GDP growth" that will result in 7.3% real GDP expansion in 2030. That's far above the 3.1% forecast by the IMF, and likely overly optimistic. The reality is likely somewhere between these two extremes, but together they paint a picture of the uncertainty and the massive risk-versus-reward possibilities engendered by AI.

OpenAI’s 6-device push reveals a frontrunner

OpenAI is racing headlong into becoming a hardware company, led by former Apple employees Jony Ive, Evans Hankey, Tang Tan, and others. Despite the Apple pedigree, the OpenAI hardware team appears to be operating much more like a Silicon Valley software or AI company by moving fast and trying a lot of different things.

A new report from The Information expanded the number of devices that OpenAI is prototyping. In fact, the report mentioned three new devices that I haven't seen reported anywhere else.

The list of reported devices from OpenAI now includes:

- Smart glasses: These would presumably be similar to Meta Ray-Bans and forthcoming products from Google, Samsung, and Apple, but won't arrive until 2028. This was the most surprising of the three newly reported devices since Sam Altman had previously stated that the special device OpenAI was working on with Jony Ive was not a pair of glasses.

- Smart speaker: This is another of the newly reported devices and would compete with market-leading Amazon Echo speakers, which have been quite popular but are very limited in capabilities and are facing a five-year decline in sales.

- Smart lamp: The last of the three newly reported devices, this would presumably be a smart speaker built into a lamp. Perhaps it might also tie into the recently announced ChatGPT Health and track your sleep.

- Smart earbud: More recently, reports have centered on OpenAI's Jony Ive device morphing into a smart earbud (or earbuds). The Foxconn supply chain leak on this one cited a release date of this September. It still feels like the most useful and the most imminent.

- Smart pin: This smart pin sounds quite like the poorly received Humane AI pin. I suspect that OpenAI may have pivoted away from this device to the earbud mentioned above. However, Apple has also reportedly been working on an AI pin, so OpenAI might still be considering the form factor as well.

- Smart pen: Not to be left out, OpenAI has also reportedly been working on an AI smart pen. Like the earbud, this device has been tied to supply chain leaks connected to Foxconn. This one would presumably feed your handwritten notes into ChatGPT to add to voice and typed inputs. We can also imagine this device could have a microphone and/or camera to make it multimodal.

The biggest thing these OpenAI devices would have going for them — as much as the device aesthetics from the former Apple aficionados — is ChatGPT Voice (formerly Voice Mode). This feature isn't often discussed, but it's far more capable and usable than Siri or Alexa. I'd even give it a slight edge over Google's Ask Gemini (formerly Google Assistant), which is the only voice mode currently in its league.

Our Deeper View

Of all six of these reported devices, I still consider the AI smart earbud the most promising in the short term. It would likely be moderate in price, it's a form factor most people already use, and even just having ChatGPT Voice at the ready would be a win. When we polled The Deep View audience earlier this year about buying an AI device from OpenAI, 43% said they would. So the brand still has work to do in winning over consumers. Also keep in mind that companies regularly leak reports like this to the press as trial balloons to gauge which devices draw the most buzz from consumers before deciding whether to actually manufacture them. So I predict we will not see all six of these devices come to market.

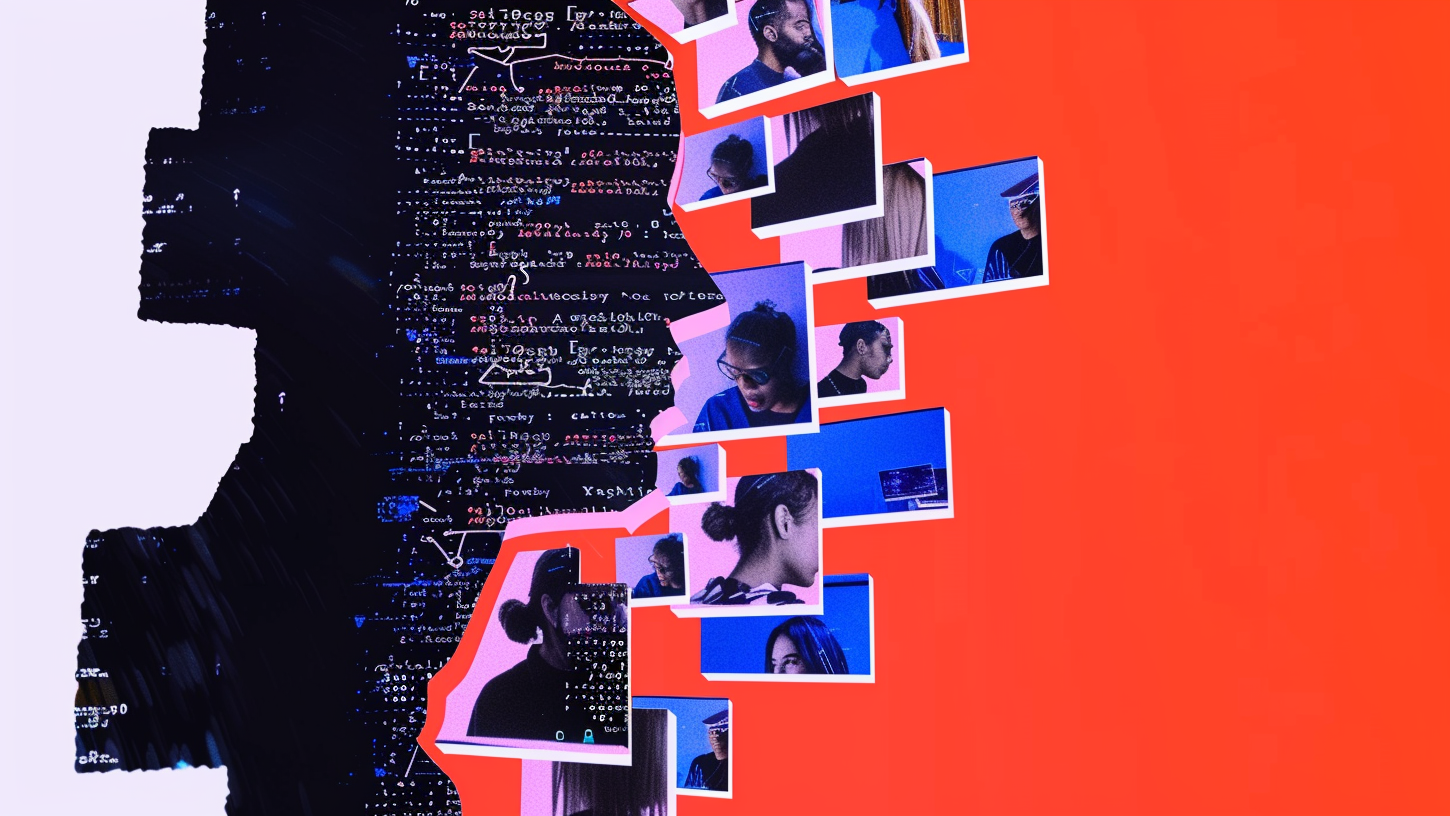

How perception itself became hackable

In the latest episode of The Deep View: Conversations, I talked with Wasim Khaled, CEO of Blackbird AI, to explore a provocative idea: What happens when reality itself becomes hackable?

Long before generative AI went mainstream, Wasim and his cofounder launched Blackbird to tackle disinformation and narrative manipulation. Their thesis was bold: that part of modern cybersecurity conflict had shifted from infrastructure to information, from networks to narratives.

It turned out to be prescient.

As AI supercharges the speed, scale, and realism of malicious content — from deepfakes to coordinated influence campaigns — Blackbird has emerged as the leader in combating narrative attacks. In fact, Gartner recently named Blackbird the company to beat in disinformation narrative intelligence in its report on the AI Vendor Race.

In our conversation, we explore:

- What “narrative attacks” really are and why they’re so hard to detect

- How AI has fundamentally changed the disinformation battlefield

- Reactive vs. proactive defense strategies in cybersecurity

- How Blackbird evolved from a lab experiment into a national security player

- Why leaders relying on chatbots instead of AI agents are already falling behind

Wasim also shares how he optimizes his time for maximum leverage, and offers his advice for founders navigating fast-moving technology shifts.

If you care about cybersecurity, AI, information warfare, or the future of leadership in the age of intelligent agents, this is a conversation you'll want to hear.

